LatSearch: Latent Reward-Guided Search for Faster Inference-Time Scaling in Video Diffusion

TL;DR

We introduce LatSearch, an inference-time scaling method for video diffusion that uses a latent reward model to evaluate partially denoised latents during generation. By providing intermediate reward guidance along the denoising trajectory, LatSearch enables efficient reward-guided resampling and pruning, improving video quality while reducing runtime by up to 79% compared to existing search-based approaches.

Abstract

The recent success of inference-time scaling in large language models has inspired similar explorations in video diffusion. In particular, motivated by the existence of "golden noise" that enhances video quality, prior work has attempted to improve inference by optimising or searching for better initial noise. However, these approaches have notable limitations: they rely either on priors imposed at the start of sampling or on rewards evaluated only on fully denoised and decoded videos. This leads to error accumulation, delayed and sparse reward signals, and prohibitive computational cost, which in turn prevents the use of stronger search algorithms. Crucially, stronger search algorithms are precisely what could unlock substantial gains in controllability, sample efficiency and generation quality for video diffusion, provided their computational cost can be reduced.

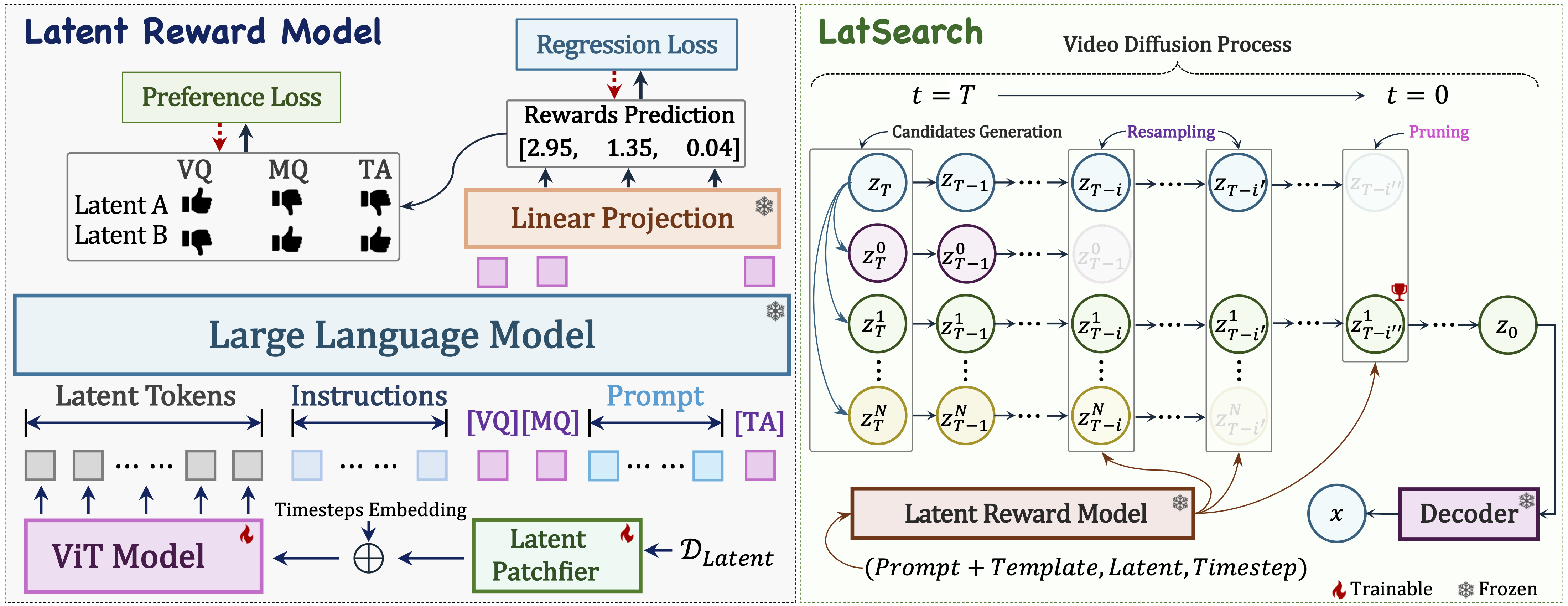

To fill this gap, we enable efficient inference-time scaling for video diffusion through latent reward guidance, which provides intermediate, informative and efficient feedback along the denoising trajectory. We introduce a latent reward model that scores partially denoised latents at arbitrary timesteps with respect to visual quality, motion quality, and text alignment. Building on this model, we propose LatSearch, a novel inference-time search mechanism that performs Reward-Guided Resampling and Pruning (RGRP). In the resampling stage, candidates are sampled according to reward-normalised probabilities to reduce over-reliance on the reward model. In the pruning stage, applied at the final scheduled step, only the candidate with the highest cumulative reward is retained, improving both quality and efficiency. We evaluate LatSearch on the VBench-2.0 benchmark and demonstrate that it consistently improves video generation across multiple evaluation dimensions compared with the baseline Wan2.1 model. Compared with state-of-the-art search-based methods, our approach achieves comparable or better quality while reducing runtime by up to 79%.
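The two RGRP stages can be illustrated with a minimal NumPy sketch. The text does not specify how rewards are normalised into probabilities; here we assume a temperature-scaled softmax, and the function names `resample_indices` and `prune` and the `temperature` parameter are hypothetical. Sampling with replacement from these probabilities, rather than always keeping the top-scoring candidate, is what limits over-reliance on the reward model.

```python
import numpy as np


def resample_indices(rewards, num_samples, temperature=1.0, rng=None):
    """Draw candidate indices with probability proportional to exp(r / temperature).

    Assumption: a temperature-scaled softmax is used as the reward normalisation.
    """
    rng = np.random.default_rng() if rng is None else rng
    r = np.asarray(rewards, dtype=float)
    logits = (r - r.max()) / temperature  # subtract the max for numerical stability
    weights = np.exp(logits)
    probs = weights / weights.sum()
    # Sampling with replacement lets strong candidates be duplicated
    # while weaker ones still keep a non-zero chance of surviving.
    return rng.choice(len(r), size=num_samples, replace=True, p=probs)


def prune(cumulative_rewards):
    """Return the index of the candidate with the highest cumulative reward."""
    return int(np.argmax(cumulative_rewards))
```

Lowering `temperature` pushes resampling toward greedy selection of the top-scoring candidate, while raising it approaches uniform sampling; the softmax form is one plausible choice, not necessarily the one used in LatSearch.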

Method

Our method consists of two components: a latent reward model and a latent reward-guided inference-time search algorithm, LatSearch. The latent reward model evaluates partially denoised latents at arbitrary timesteps, predicting scores for visual quality, motion quality, and text alignment using a multimodal architecture that integrates a ViT encoder with a large language model. Based on these intermediate rewards, LatSearch maintains multiple candidate diffusion trajectories and periodically performs reward-guided resampling to explore promising latent states. At the final stage, candidates are pruned according to cumulative rewards, producing the final video with improved quality and efficiency.
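The overall search loop described above might look roughly like the following toy sketch; it is not the authors' implementation. `denoise_step` and `latent_reward` are hypothetical callables standing in for one step of the diffusion sampler and for the latent reward model (assumed here to return a single scalar, e.g. a weighted combination of the visual quality, motion quality and text alignment scores). The resampling interval `resample_every` and the softmax `temperature` are assumed hyperparameters. In this sketch, resampling uses the reward at the current checkpoint, each surviving candidate inherits its ancestor's cumulative reward, and pruning keeps the single best candidate at the final step.

```python
import numpy as np


def latsearch_sketch(init_latents, denoise_step, latent_reward, num_steps,
                     resample_every, temperature=1.0, seed=0):
    """Toy reward-guided resample-and-prune loop over K candidate latents.

    Hypothetical interfaces: denoise_step(latent, t) -> latent and
    latent_reward(latent, t) -> float.
    """
    rng = np.random.default_rng(seed)
    latents = [np.asarray(z, dtype=float) for z in init_latents]
    k = len(latents)
    cumulative = np.zeros(k)  # running sum of intermediate rewards per candidate

    for t in range(num_steps):
        latents = [denoise_step(z, t) for z in latents]
        is_checkpoint = (t + 1) % resample_every == 0
        is_final = t == num_steps - 1
        if not (is_checkpoint or is_final):
            continue

        # Score every partially denoised latent and accumulate the rewards.
        rewards = np.array([latent_reward(z, t) for z in latents])
        cumulative += rewards
        if is_final:
            break  # no resampling at the final step; pruning follows

        # Resample K candidates with reward-normalised (softmax) probabilities.
        logits = (rewards - rewards.max()) / temperature
        weights = np.exp(logits)
        idx = rng.choice(k, size=k, replace=True, p=weights / weights.sum())
        latents = [latents[i].copy() for i in idx]
        cumulative = cumulative[idx]  # children inherit their ancestor's score

    # Pruning: keep only the candidate with the highest cumulative reward.
    best = int(np.argmax(cumulative))
    return latents[best], cumulative[best]
```

In a real sampler, `denoise_step` would be stochastic (or candidates would be re-noised after resampling) so that duplicated candidates diverge; with a deterministic step, copies of the same latent stay identical.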

Video Results Comparison

Comparison between the baseline and our proposed LatSearch.

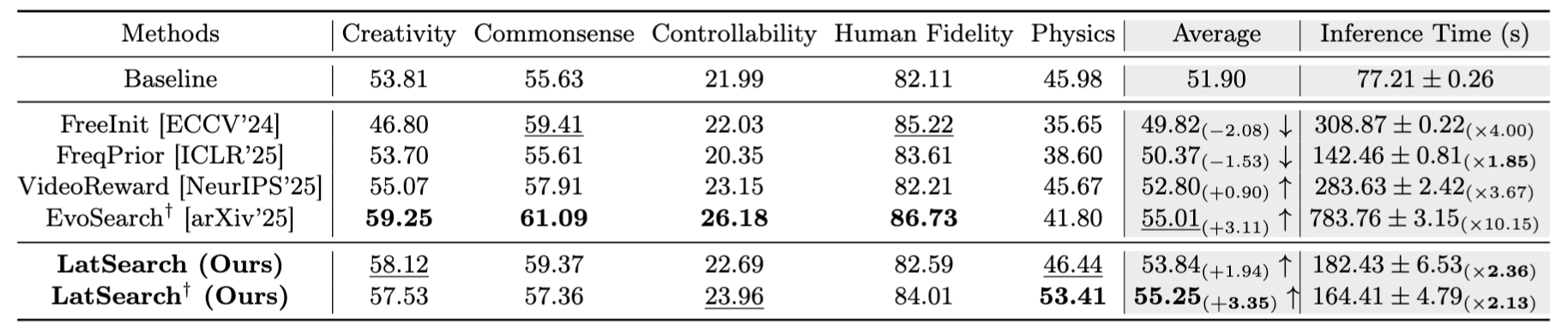

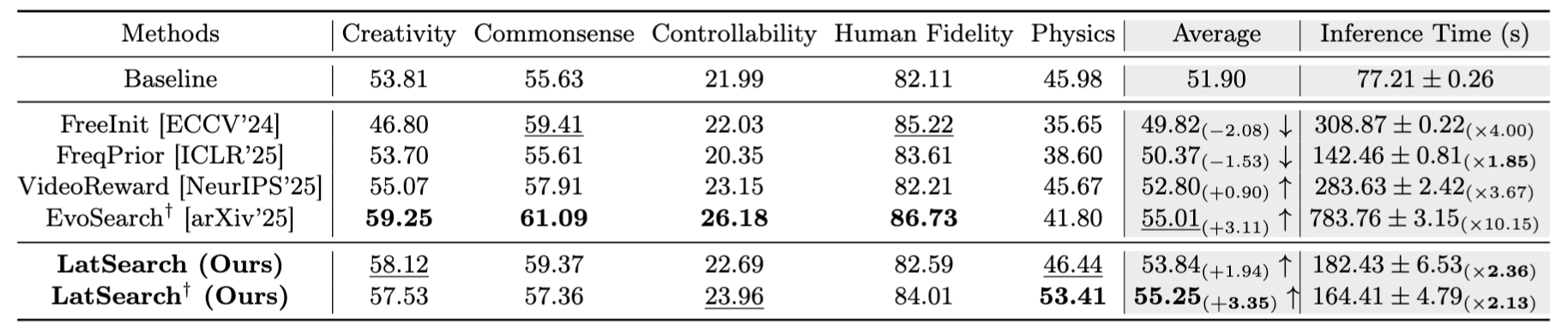

Quantitative Results

BibTeX

Contact

For any questions, please contact zengqun dot zhao at qmul dot ac dot uk.